Optical character recognition is no longer a backstage utility that turns scans into text. In 2026 it sits at the center of how organizations understand documents, route work, and keep data trustworthy. This article covers the OCR trends that matter most this year, stripped of buzzwords and focused on what actually changes day-to-day work. The short version: OCR is growing up from reading letters to reasoning about documents.

From reading text to understanding documents

The biggest shift is architectural. Transformer-based models that treat layout as a first-class signal now dominate, blending vision and language in one pass. Open research such as LayoutLMv3, Donut, and TrOCR, along with text-aware vision encoders, feeds commercial systems that extract entities, tables, and relationships without brittle templates. You don’t just get text; you get a structured slice of the document and the context around it.

That structure now connects to downstream reasoning. LLMs orchestrate extraction, validation, and summarization, turning OCR output into line items, compliance notes, or support tickets. In practice, that means invoices mapped straight into your ERP schema and contracts flagged for unusual clauses before anyone opens the PDF.
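To make the invoice-to-ERP step concrete, here is a minimal sketch of the mapping and one validation rule. The field names, the ERP column names, and the `to_erp_record` helper are all hypothetical; a real integration would follow whatever schema your ERP and extractor actually expose.

```python
from decimal import Decimal

# Hypothetical mapping from extractor field names to ERP column names.
FIELD_MAP = {
    "invoice_no": "DocumentNumber",
    "supplier": "VendorName",
    "total": "GrossAmount",
}


def to_erp_record(extracted: dict) -> dict:
    """Rename extracted fields to ERP names and check that lines sum to the total."""
    record = {erp: extracted[src] for src, erp in FIELD_MAP.items() if src in extracted}
    lines = extracted.get("line_items", [])
    record["Lines"] = [
        {"Description": line["description"], "Amount": line["amount"]} for line in lines
    ]
    # Decimal avoids float rounding surprises when comparing money amounts.
    line_sum = sum((Decimal(line["amount"]) for line in lines), Decimal("0"))
    record["TotalsMatch"] = "total" in extracted and line_sum == Decimal(extracted["total"])
    return record
```

Amounts are passed as strings here so nothing is rounded before the comparison. A record with `TotalsMatch` set to `False` is a natural candidate for human review rather than automatic posting.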

In my consulting work with a regional distributor, moving from legacy OCR to a layout-aware pipeline cut exception handling by a third. The technical leap wasn’t flashy—just a model swap and schema mapping—but it let the team redirect hours from retyping to negotiating better terms with suppliers.

Why this shift matters

Accuracy alone isn’t the headline; reliability is. Systems that understand structure fail more gracefully, expose confidence per field, and make audit trails feasible. That makes procurement, claims, and onboarding flows faster and safer without a fragile pile of regex rules.

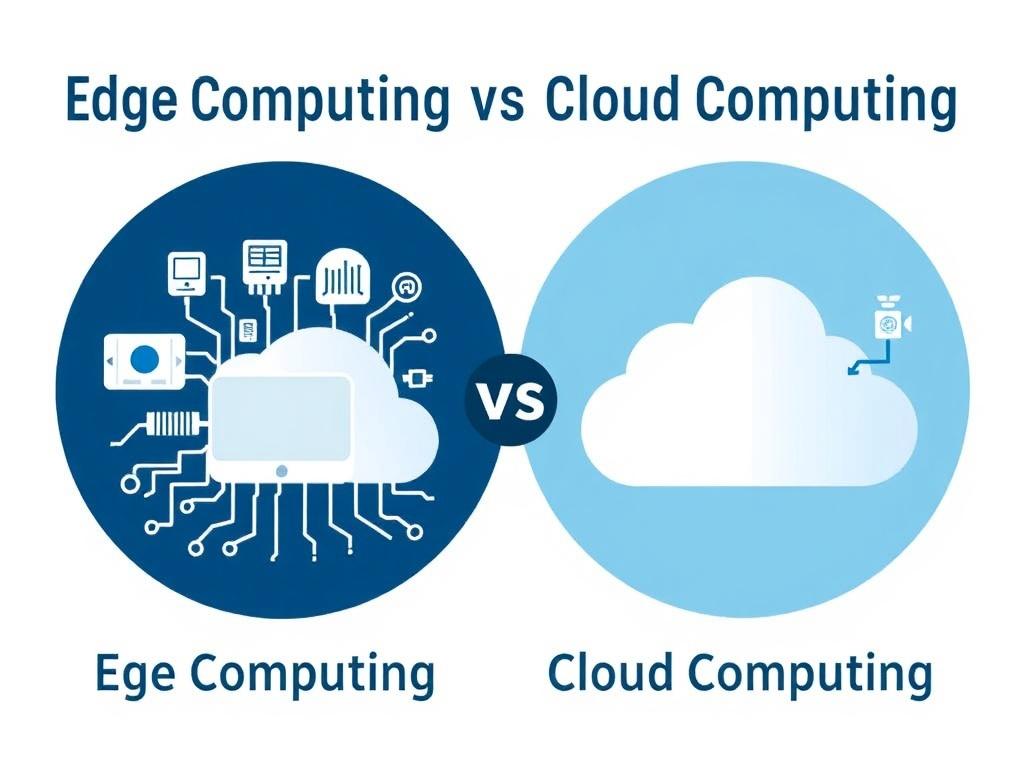

Edge and on-device OCR takes the lead

With NPUs now common in phones and laptops, on-device OCR has crossed from niche to normal. Apple’s Neural Engine, Qualcomm’s Hexagon, and Intel’s NPU hardware run compact vision-language models at the edge, slashing latency and keeping sensitive data local. For field teams scanning labels in a warehouse or nurses capturing charts bedside, sub-200 ms feedback changes the cadence of work.

Privacy is the second pillar. Many healthcare and finance workflows limit cloud transmission, and on-device processing solves that without sacrificing quality. Teams still sync models and aggregate insights, but raw images of IDs, medical notes, or pay stubs never leave the device—a relief for compliance and a trust signal to customers.

Handwriting, low-resource languages, and messy inputs get their turn

Self-supervised pretraining and synthetic data are pushing handwriting recognition past the awkward middle ground where only neat printing worked. Transformers trained on strokes, layout, and text context now read physician notes, delivery signatures, and classroom whiteboards with fewer blind spots. It isn’t perfect, but it’s reliably useful, which is new.

Coverage across scripts is improving too. Script detection routes pages to specialized decoders for Devanagari, Arabic, Thai, and mixed-language documents that once flummoxed systems. For global teams, that means one pipeline for multilingual receipts and identity documents instead of a patchwork of vendors.
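The routing idea can be illustrated with a toy version. Production systems usually detect script from the image itself; the sketch below instead assumes a fast first pass has already produced rough text, and it picks decoders from Unicode character names. The decoder identifiers and the `min_share` cutoff are made up for illustration.

```python
import unicodedata
from collections import Counter
from typing import List, Optional

# Hypothetical decoder identifiers keyed by the script word in Unicode names.
DECODERS = {
    "DEVANAGARI": "decoder-devanagari",
    "ARABIC": "decoder-arabic",
    "THAI": "decoder-thai",
    "LATIN": "decoder-latin",
}


def script_of(ch: str) -> Optional[str]:
    """Return the script keyword for a letter, or None for anything else."""
    if not ch.isalpha():
        return None
    first_word = unicodedata.name(ch, "").split(" ", 1)[0]
    return first_word if first_word in DECODERS else None


def route(text: str, min_share: float = 0.2) -> List[str]:
    """List the decoders whose script covers at least min_share of the letters."""
    counts = Counter(s for s in map(script_of, text) if s)
    total = sum(counts.values())
    if total == 0:
        return [DECODERS["LATIN"]]  # assumed fallback when no known script is found
    return [DECODERS[s] for s, n in counts.most_common() if n / total >= min_share]
```

A mixed-language page returns more than one decoder, which is the case that used to require separate vendors.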

Image quality no longer blocks progress the way it did. Diffusion-based denoising, deblurring, and super-resolution recover legible text from low-light phone captures and fax-era scans. Postal addresses on crumpled parcels and thermal receipts from gas stations are finally first-class citizens.

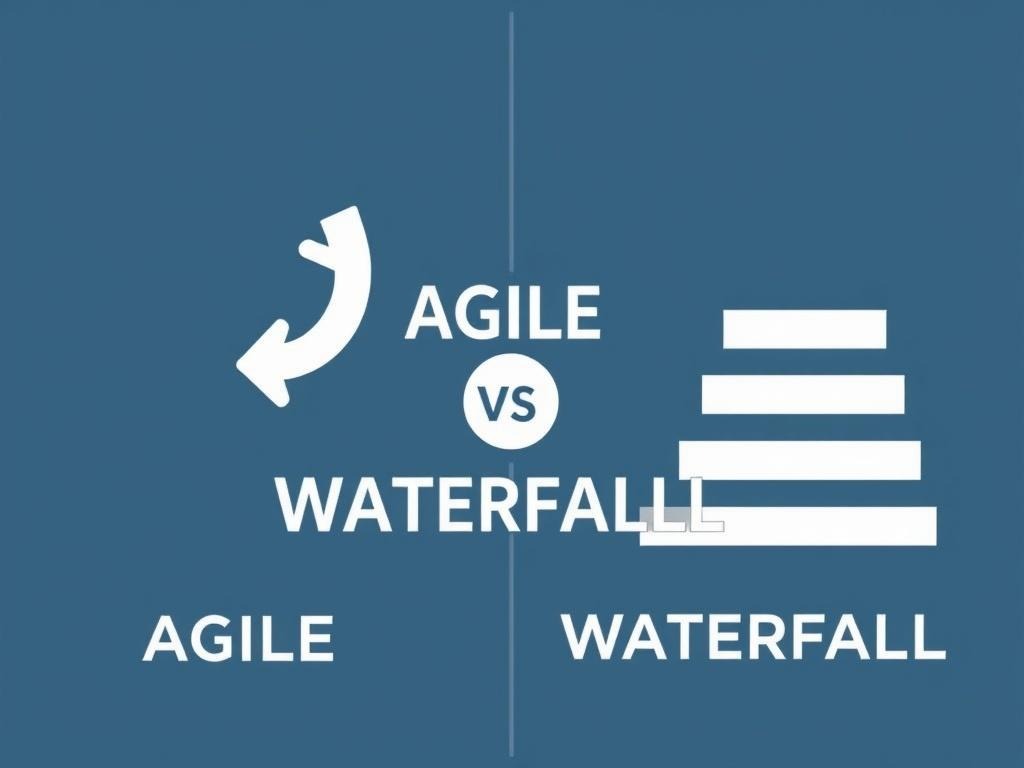

From PDFs to APIs: structured outputs that drive automation

The shape of the output matters. Modern engines return typed JSON with bounding boxes, reading order, tables as cell grids, and even implied relationships like header-body links. Confidence scores per token and per field make human-in-the-loop review rational: verify only the items that fall below threshold (say, 12 percent of them) and let the rest flow straight into systems.
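Threshold-based review is simple to sketch. The `Field` shape, the bounding-box convention, and the 0.90 default below are assumptions for illustration, not any particular engine's output format.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Field:
    name: str
    value: str
    confidence: float  # 0.0 to 1.0, as reported by the engine
    bbox: Tuple[int, int, int, int]  # assumed (x, y, width, height) in pixels


def split_for_review(
    fields: List[Field],
    thresholds: Dict[str, float],
    default: float = 0.90,
) -> Tuple[List[Field], List[Field]]:
    """Split fields into (auto-accepted, needs human review) by per-field threshold."""
    auto: List[Field] = []
    review: List[Field] = []
    for f in fields:
        limit = thresholds.get(f.name, default)
        (auto if f.confidence >= limit else review).append(f)
    return auto, review
```

Per-field thresholds let you be strict where mistakes are expensive, such as bank account numbers, and lenient where they are cheap.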

That structure unlocks orchestration with RPA and business rules. A claims bot can triage cases by detected severity terms and missing pages; a finance flow can validate VAT IDs against registries before posting. The move from “a text blob” to “a dependable schema” is what turns OCR from a cost line into a throughput multiplier.
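As a rough illustration of the claims example, the sketch below picks a queue from OCR'd pages. The severity vocabulary, queue names, and rule order are all hypothetical; real triage rules would come from the claims team.

```python
from typing import Dict

# Hypothetical severity vocabulary; a real deployment would maintain its own.
SEVERITY_TERMS = ("fracture", "surgery", "total loss")


def triage(pages: Dict[int, str], expected_pages: int) -> Dict[str, object]:
    """Pick a queue for a claim from its OCR'd pages (page number -> text)."""
    missing = sorted(set(range(1, expected_pages + 1)) - set(pages))
    text = " ".join(pages.values()).lower()
    hits = [term for term in SEVERITY_TERMS if term in text]
    if missing:
        queue = "request_documents"  # incomplete files are chased first
    elif hits:
        queue = "senior_adjuster"
    else:
        queue = "standard"
    return {"queue": queue, "missing_pages": missing, "severity_terms": hits}
```

Plain substring matching is the weakest part of this sketch. It is enough to show the routing shape, but it would miss synonyms and misread negations such as "no fracture".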

| Feature | Cloud OCR (2026) | On-device OCR (2026) |
|---|---|---|
| Latency | 100–800 ms, network-dependent | 50–200 ms, consistent |
| Privacy | Data leaves device unless special setup | Data stays local by default |
| Cost predictability | Per-page or per-token fees | Upfront device cost; low marginal cost |
| Offline support | Limited | Native |
| Model updates | Instant, vendor managed | Versioned, pushed to fleet |
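The cost row in the comparison above comes down to a break-even calculation. The sketch below assumes straight-line amortization of device cost and flat per-page fees; every number you feed it is your own estimate, not a vendor price.

```python
from typing import Optional


def cloud_monthly_cost(pages: int, fee_per_page: float) -> float:
    """Cloud cost under a flat per-page fee."""
    return pages * fee_per_page


def device_monthly_cost(
    pages: int,
    devices: int,
    device_cost: float,
    amortization_months: int,
    marginal_per_page: float,
) -> float:
    """On-device cost: amortized hardware plus a small per-page marginal cost."""
    fixed = devices * device_cost / amortization_months
    return fixed + pages * marginal_per_page


def break_even_pages(
    fee_per_page: float,
    devices: int,
    device_cost: float,
    amortization_months: int,
    marginal_per_page: float,
) -> Optional[float]:
    """Monthly page volume above which on-device is cheaper, or None if never."""
    saving_per_page = fee_per_page - marginal_per_page
    if saving_per_page <= 0:
        return None
    return devices * device_cost / amortization_months / saving_per_page
```

Below the break-even volume the per-page model is cheaper. Above it, the fixed hardware cost is spread thin enough that on-device wins.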

Security, trust, and compliance move to the front

OCR now touches identity, health, and finance, so governance can’t be an afterthought. Mature platforms log who processed what, when, on which model version, and with which confidence thresholds—crucial for audits and incident response. Built-in redaction of PII, data residency controls, and role-based access are becoming table stakes in regulated sectors.
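An audit entry of this kind can be quite small. The sketch below assumes the record stores a hash of the document rather than the document itself, and it pairs that with a deliberately naive email redactor. Both function names and the record layout are illustrative.

```python
import hashlib
import re
from datetime import datetime, timezone
from typing import Dict


def audit_record(
    user: str,
    document_bytes: bytes,
    model_version: str,
    thresholds: Dict[str, float],
) -> dict:
    """Describe one processing event without storing the document itself."""
    return {
        "user": user,
        "document_sha256": hashlib.sha256(document_bytes).hexdigest(),
        "model_version": model_version,
        "thresholds": thresholds,
        "processed_at": datetime.now(timezone.utc).isoformat(),
    }


# Naive pattern for illustration only; real PII detection needs far more than a regex.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact_emails(text: str) -> str:
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL.sub("[REDACTED_EMAIL]", text)
```

Hashing the input ties the log entry to an exact file, which helps during incident response, while keeping sensitive content out of the log.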

Trust also means robustness against fraud. Engines are learning to spot tampering, synthetic text, and mismatched fonts on IDs and pay slips, and to verify digital seals where available. Fairness testing across languages and document types is starting to appear in RFPs; vendors need to show performance by cohort, not just a single headline number.

Emerging edges you’ll actually use

Real-time translation overlays for signage and packaging are cleaner and less jittery thanks to better tracking and on-device models. Chart and table understanding goes beyond cell extraction to semantic labeling—detecting that a line jumps at a policy change and pulling that context into a summary. STEM-heavy teams benefit from improved formula recognition, turning whiteboard math into LaTeX without a weekend of cleanup.

For customer support, OCR pairs neatly with retrieval: scan a manual, link sections to a knowledge base, and surface answers inside chat. In retail, shelf checks mix OCR with barcode and price tag layout to catch mislabels before customers do. Small wins, repeated daily, are where the value piles up.

A quick buyer’s checklist for this year

If you’re shortlisting vendors, a simple checklist keeps demos honest and keeps the focus on outcomes rather than sizzle.

- Measure accuracy by field and document type, not just overall word error rate.

- Demand per-field confidence and easy routing to human review below thresholds.

- Test latency on your real devices and networks, including offline modes.

- Inspect the JSON schema: does it model tables, reading order, and page structure?

- Verify security posture: audit logs, data residency, redaction, and model versioning.

- Run a total cost model for your volume: per-page fees vs. on-device deployment.
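The first item on the checklist is easy to run yourself on a labeled sample. The sketch below assumes exact string match after trimming whitespace as the correctness test; many teams will want normalization rules for dates and amounts on top of that.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Sample = Tuple[str, str, str, str]  # (doc_type, field, predicted, expected)


def accuracy_by_field(samples: Iterable[Sample]) -> Dict[Tuple[str, str], float]:
    """Exact-match accuracy per (document type, field) pair."""
    hits: Dict[Tuple[str, str], int] = defaultdict(int)
    totals: Dict[Tuple[str, str], int] = defaultdict(int)
    for doc_type, field, predicted, expected in samples:
        key = (doc_type, field)
        totals[key] += 1
        hits[key] += int(predicted.strip() == expected.strip())
    return {key: hits[key] / totals[key] for key in totals}
```

Breaking results out this way shows where a vendor's strong overall number hides a weak field, such as totals on receipts or dates on handwritten forms.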

What this adds up to

The OCR trends of 2026 aren’t a list of buzzwords; they form a pattern. Models that understand layout, run close to the camera, and speak the language of your systems turn documents into data you can trust. The teams that win won’t be the ones with the flashiest demo. They’ll be the ones that quietly wire this capability into the boring, valuable parts of the business and let the throughput speak for itself.